Abstract

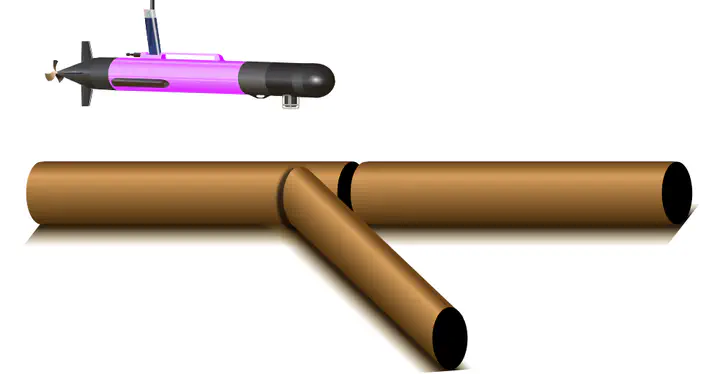

With the advent of AI-driven applications, testing faces new challenges in the integration of software with AI components. We present a novel testing approach for the integration of software with symbolic AI in the form of knowledge graphs (KGs). Because the KG is expected to change during the runtime and lifetime of the software, we must ensure that the system is robust to changes in the KG. Starting from a single KG, we mutate its content and test the unchanged software against the original test oracle. To address the specific challenges of KGs, we introduce two additional concepts. First, as generic mutations on single triples are too fine-grained to reliably generate a different, consistent KG, we introduce domain-specific mutation operators that manipulate subgraphs in a domain-adherent way. Second, we need to specify those parts of the KG that the software relies on for correctness. To this end, we introduce the notion of a robustness mask, which describes shapes in the graph to which every mutant must conform. We evaluate our approach on two software applications from the robotics and simulation domains that tightly integrate with their respective KGs, as well as on three OWL reasoners, where we found several previously unknown bugs.